Overview

This is a small exercise my friend Shengxi and I worked through to learn the basics of designing voice user interfaces (VUIs). We followed Amazon's recommended process for designing the voice interaction.

Problem

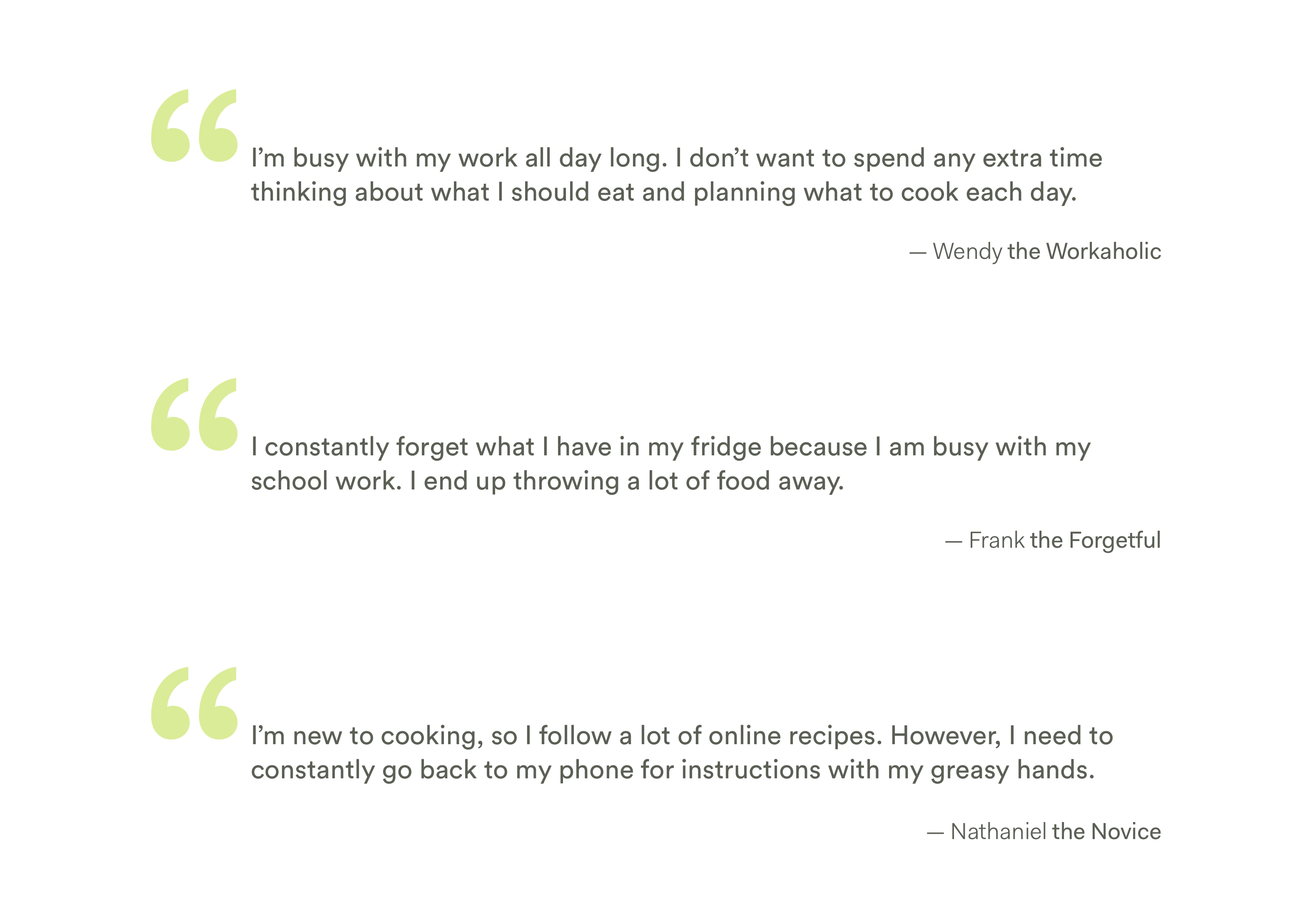

College students and young professionals with busy schedules often find cooking and keeping track of what is in their fridges time-consuming. Some forget what they have in the fridge and end up wasting food; others dislike spending time figuring out what to cook for each meal with the ingredients on hand.

Solution

We present Cook Fresh, an Alexa skill concept that enables Alexa to suggest recipes based on available ingredients in the fridge, remind users of expiring food, order missing ingredients from Amazon Fresh, and provide step-by-step cooking guidance.

Several products from Samsung and Whirlpool built on similar ideas were later presented at CES 2018, demonstrating the feasibility of our concept.

My Role

Voice User Interface Designer, UX Designer

Timeline

Two Days (Dec. 7 - Dec. 8, 2017)

Team Members

Tony Jin, Shengxi Wu

Tools

Sayspring (Voice Interface Prototyping Tool)

My Contribution

I came up with the initial design idea and finalized it with the help of Shengxi. I conducted secondary research, designed the end-to-end interaction flow, and prototyped the product in Sayspring.

Design Outcome

I no longer have access to my initial prototype in Sayspring since the tool was acquired by Adobe. I will create another prototype and share its link soon. In the meantime, please refer to the process below if you're interested in the design.

Problem Discovery

User Needs

As when designing for any other platform, we started by talking with people, identifying their problems, and defining user needs. For this short design exercise, we quickly talked with people around us and grouped their needs into three broad categories: getting quick recipe suggestions based on available ingredients, receiving reminders about expiring food, and following hands-free cooking instructions.

Why Voice?

Voice is a Natural Way of Communication

It is quick and intuitive for users to simply ask "what should I cook for dinner?" instead of navigating a graphical UI and learning how its information is structured. This way, users don't need to adapt to the technology and can get suggestions and instructions from the skill right away.

A Voice User Interface Creates an Eyes-Free and Hands-Free Experience

A voice UI doesn't require users to touch or look at any external device. This is especially helpful when cooking, since users' eyes and hands are usually busy.

Design

Thinking Boldly

Since the primary purpose of this exercise was to get familiar with voice interface design, we decided to think boldly and imagine the future of voice interfaces and smart home technologies.

One assumption we made is that future smart fridges would be able to recognize what's in them and whether the food and ingredients are about to expire. This could be realized by attaching a tag to each product we buy that encodes its name and expiration date, or by using computer vision algorithms to detect products inside the fridge and estimate whether they are about to expire. Similar ideas were later shown at CES 2018, demonstrating the feasibility of our idea.
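To make the tag-based assumption a little more concrete, here is a minimal Python sketch, assuming each tag encodes only a product name and an expiration date. The `FridgeItem` record, the `expiring_items` helper, and the two-day reminder window are hypothetical illustrations, not part of any real smart-fridge API.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class FridgeItem:
    """Hypothetical record decoded from a product tag: name plus expiration date."""
    name: str
    expires_on: date


def expiring_items(items, today, window_days=2):
    """Return items expiring within `window_days` of `today` (inclusive), soonest first.
    Already-expired items are included so the skill can flag them too."""
    cutoff = today + timedelta(days=window_days)
    soon = [item for item in items if item.expires_on <= cutoff]
    return sorted(soon, key=lambda item: item.expires_on)


fridge = [
    FridgeItem("milk", date(2017, 12, 9)),
    FridgeItem("spinach", date(2017, 12, 8)),
    FridgeItem("cheddar", date(2018, 1, 15)),
]

# Items the skill would mention in an expiration reminder.
for item in expiring_items(fridge, today=date(2017, 12, 7)):
    print(f"{item.name} expires on {item.expires_on:%b %d}")
```

Sorting by date lets the voice response lead with the most urgent item, which matters because listeners tend to remember the first thing they hear.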

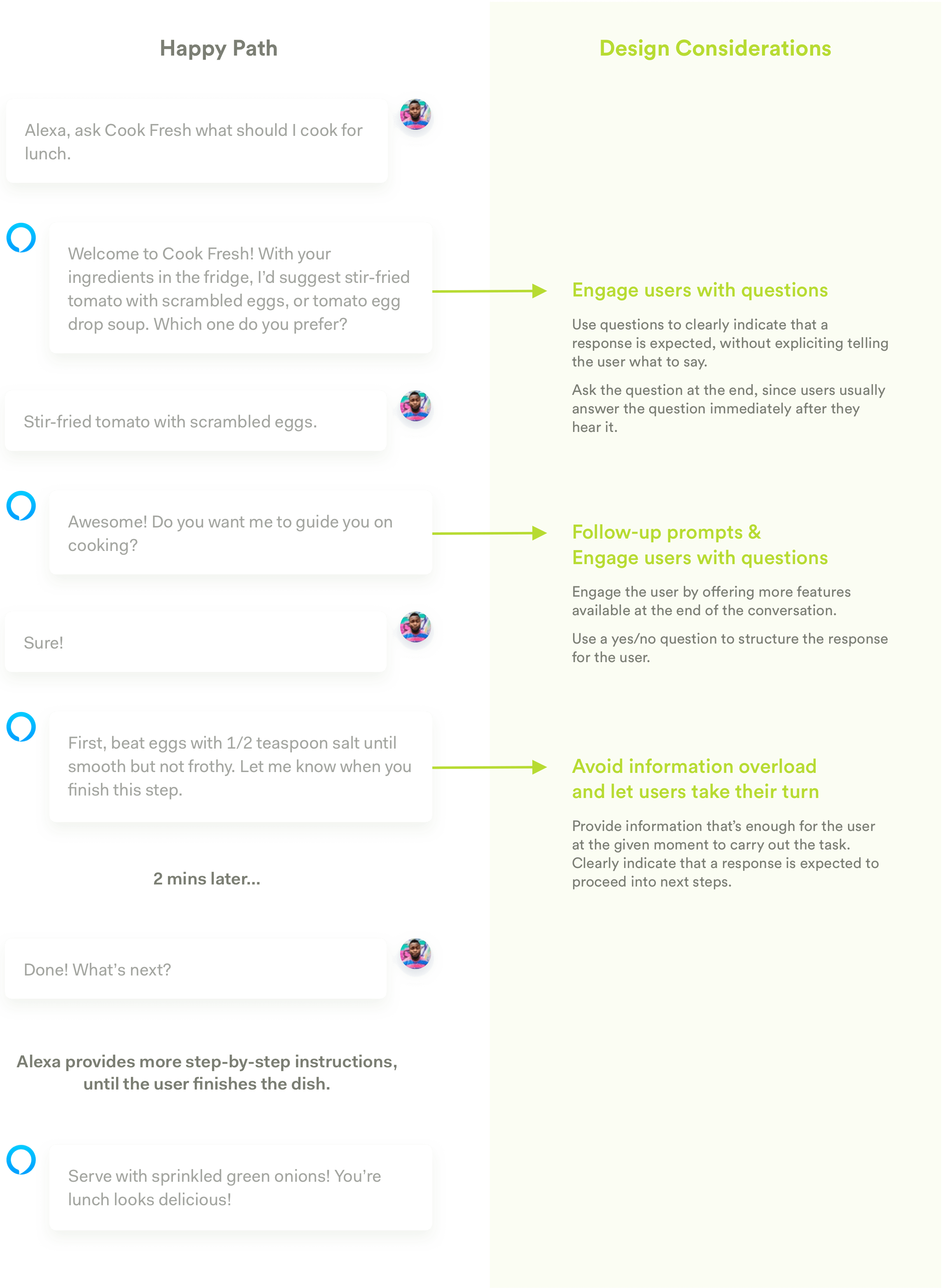

The Happy Path

We followed Amazon's VUI design guidelines and started by designing "the happy path." For each of Cook Fresh's responses, we referred back to the guidelines to make sure that it sounds natural and concise, that it provides enough information to engage the user in a conversation, and that it guides the user's response without explicitly telling them what to say.

Synonyms & Combo-Breakers

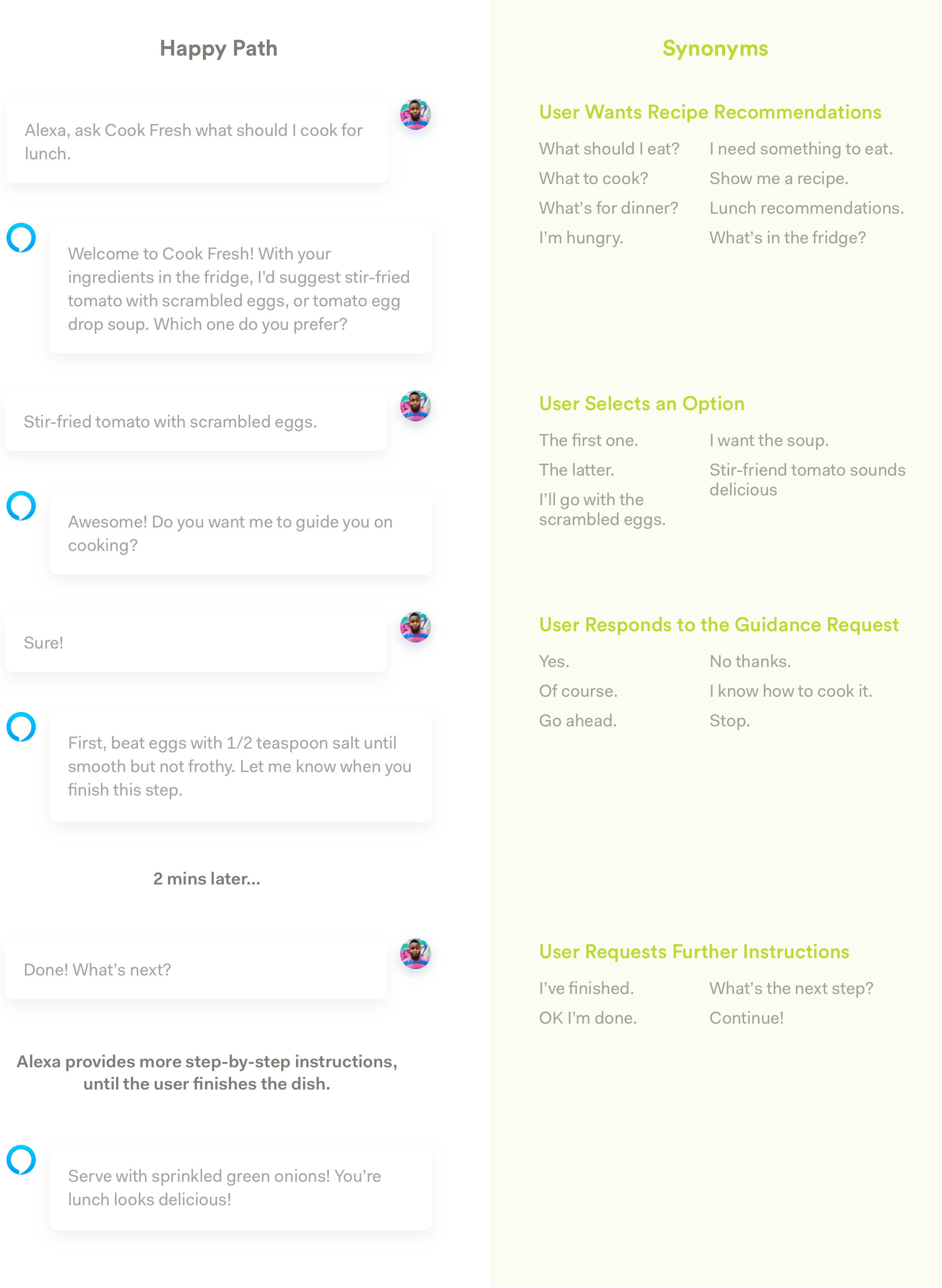

We then built on the happy path and brainstormed how users might respond differently from what we had planned. To uncover these possibilities, we ran role-play sessions with several of our friends and collected their responses. We then sorted the responses into two groups, following Amazon's guidelines: synonyms and combo-breakers.

Synonyms

Users often express the idea we expect without using the exact words we anticipated. To account for this, we came up with synonyms for each expected input and added them to our prototype.
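In an Alexa skill, synonyms like these typically become sample utterances attached to an intent. The Python sketch below is a deliberately naive stand-in for that idea: the intent names (`SuggestRecipeIntent`, `CheckExpiringIntent`, `NextStepIntent`) and phrasings are hypothetical, and matching is exact after normalization. Real Alexa language understanding generalizes beyond the listed phrases; this only shows how many phrasings collapse onto one intent.

```python
import re

# Hypothetical intents, each listing several phrasings (synonyms) users might say.
SAMPLE_UTTERANCES = {
    "SuggestRecipeIntent": [
        "what should i cook for dinner",
        "what can i make tonight",
        "give me a recipe",
    ],
    "CheckExpiringIntent": [
        "what is about to expire",
        "what food is going bad",
        "anything expiring soon",
    ],
    "NextStepIntent": [
        "next step",
        "what's next",
        "okay done",
    ],
}


def normalize(text):
    """Lowercase, replace punctuation (except apostrophes) with spaces, collapse whitespace."""
    text = re.sub(r"[^a-z0-9' ]+", " ", text.lower())
    return " ".join(text.split())


# Reverse index: normalized phrasing -> intent name.
UTTERANCE_TO_INTENT = {
    normalize(phrase): intent
    for intent, phrases in SAMPLE_UTTERANCES.items()
    for phrase in phrases
}


def resolve_intent(utterance):
    """Return the matching intent name, or None if no listed synonym matches."""
    return UTTERANCE_TO_INTENT.get(normalize(utterance))


print(resolve_intent("What can I make tonight?"))  # SuggestRecipeIntent
print(resolve_intent("Okay, done."))               # NextStepIntent
```

An utterance that matches nothing returns `None`, which is exactly where the dialog needs a fallback rather than a dead end.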

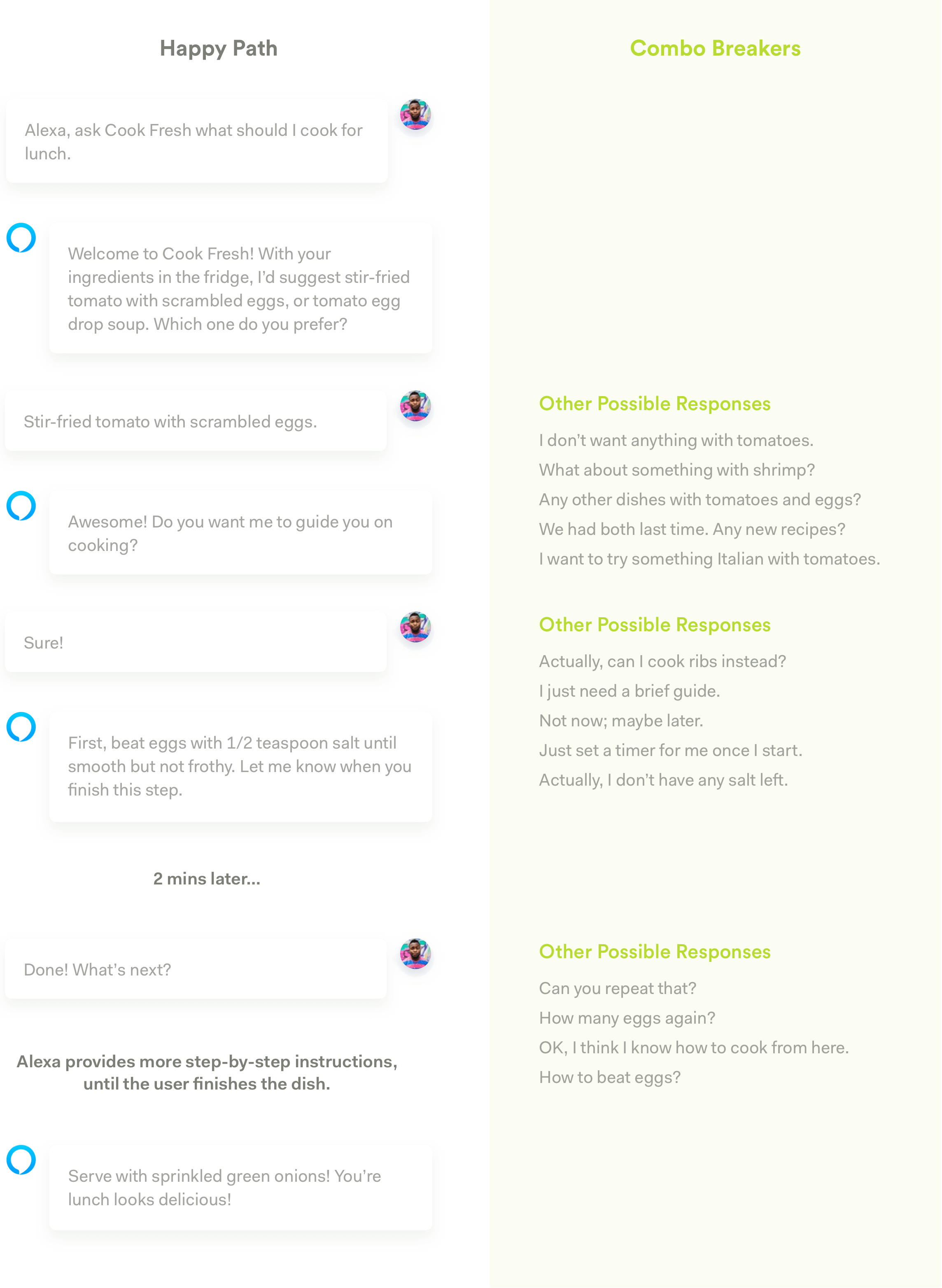

Combo-Breakers

Other times, users might not strictly follow the happy path when answering a question. They might reply with another question or bring up an option we didn't expect. We therefore listed a few such "combo-breakers" so that our skill could switch context when necessary and keep the conversation going.
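One way to picture context switching is a dialog state that answers an off-path question without losing the user's place. The Python sketch below assumes a hypothetical `CookingSession` with made-up intent names (`NextStepIntent`, `RepeatIntent`, `HaveIngredientIntent`); it illustrates the idea rather than how Cook Fresh or the Alexa Skills Kit actually manage dialog state.

```python
from dataclasses import dataclass, field


@dataclass
class CookingSession:
    """Hypothetical dialog state for step-by-step cooking guidance."""
    steps: list
    current: int = 0
    fridge: set = field(default_factory=set)

    def handle(self, intent, slot=None):
        """Route an intent. Off-path intents (combo-breakers) are answered
        without changing `current`, so the recipe resumes where it left off."""
        if intent == "NextStepIntent":
            if self.current + 1 >= len(self.steps):
                return "That was the last step. Enjoy your meal!"
            self.current += 1
            return self.steps[self.current]
        if intent == "RepeatIntent":
            return self.steps[self.current]
        if intent == "HaveIngredientIntent":
            # Combo-breaker: answer the side question, then steer back to the recipe.
            status = "you have" if slot in self.fridge else "you're out of"
            return f"Looks like {status} {slot}. Say 'next step' when you're ready."
        # Unrecognized input: reprompt with the current step instead of failing.
        return f"Sorry, I didn't catch that. You're on: {self.steps[self.current]}"


session = CookingSession(
    steps=["Boil the pasta.", "Fry the garlic.", "Toss everything together."],
    fridge={"pasta", "garlic"},
)
print(session.handle("NextStepIntent"))
print(session.handle("HaveIngredientIntent", "parmesan"))
print(session.handle("RepeatIntent"))
```

Keeping the side question from advancing `current` is the design choice that lets the conversation detour and come back.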

These synonyms and combo-breakers are not exhaustive, nor were we able to incorporate every situation into our design. It was a thought exercise that pushed us to think harder about designing for voice, given how unpredictable user input can be.

Refining Interactions

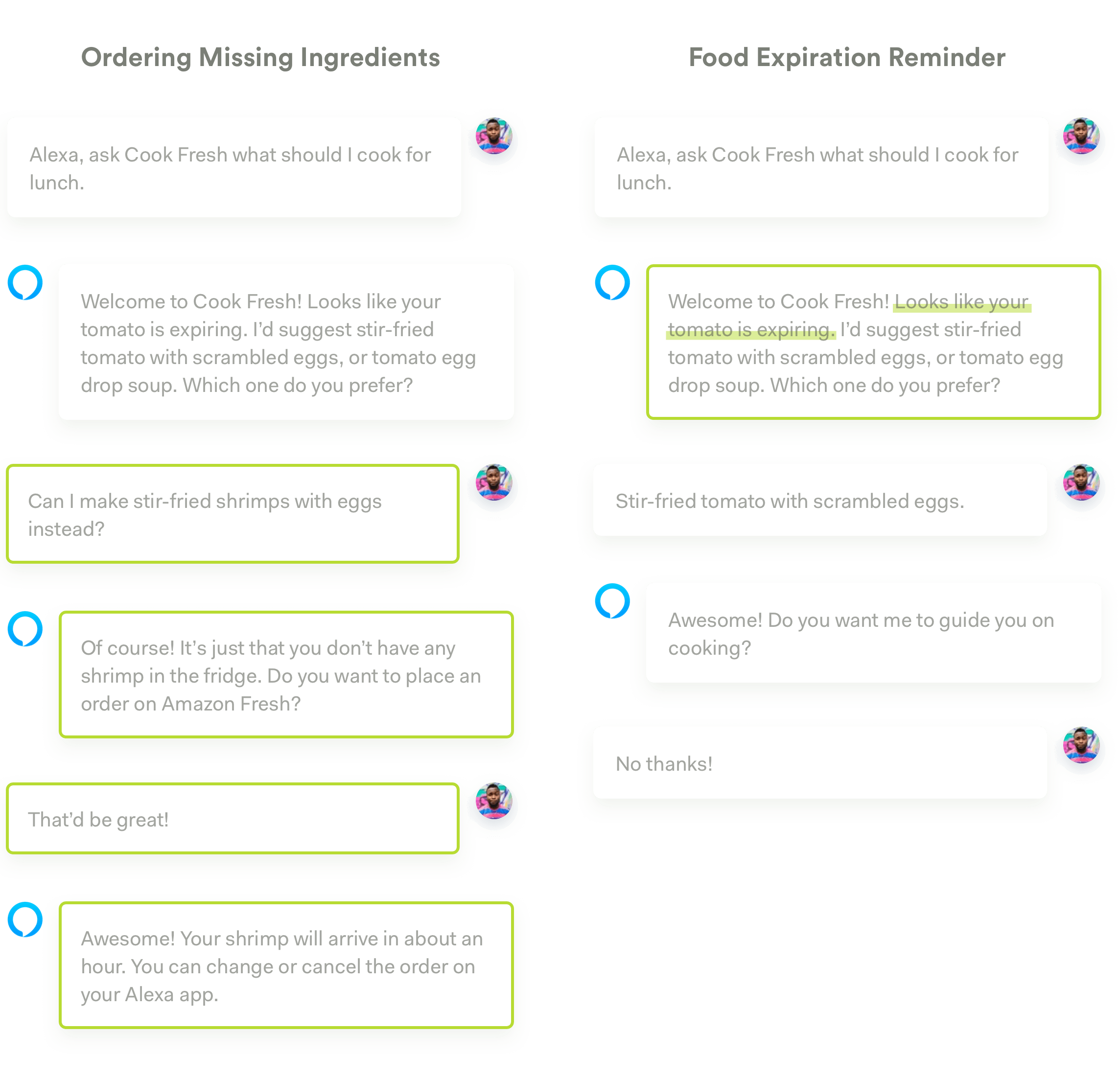

We then considered different use cases based on the combo-breakers and our initial user needs, and refined our scenario by adding the two paths shown above.

Note that to order missing ingredients, users would need to link the skill to their Amazon Fresh account and give it access to their mailing address. The first time they use this feature, they might also need to select the product they want manually, so that Cook Fresh can remember their preference and order the same product the next time it is needed.
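The "choose once, remember afterwards" behavior can be sketched in a few lines of Python. Everything here is hypothetical: `IngredientPreferences` is an in-memory store, and `ask_user` is a callback standing in for the manual first-time selection. No real Amazon Fresh API is called.

```python
class IngredientPreferences:
    """Hypothetical in-memory store of which product a user picked per ingredient."""

    def __init__(self):
        self._chosen = {}  # normalized ingredient name -> product the user selected

    def product_for(self, ingredient):
        """Return the remembered product, or None if the user hasn't chosen one yet."""
        return self._chosen.get(ingredient.lower())

    def remember(self, ingredient, product):
        self._chosen[ingredient.lower()] = product


def plan_order(prefs, ingredient, ask_user):
    """Decide which product to order. On first use, `ask_user` supplies the
    product (standing in for a manual selection) and the choice is saved."""
    product = prefs.product_for(ingredient)
    if product is None:
        product = ask_user(ingredient)
        prefs.remember(ingredient, product)
    return product


prefs = IngredientPreferences()
print(plan_order(prefs, "Spinach", lambda name: f"Organic {name.lower()}, 1 lb"))
# The second request reuses the saved choice, so the user isn't asked again.
print(plan_order(prefs, "spinach", lambda name: "never used"))
```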

Future Steps

I couldn't cover everything in the short time I had for this exercise. With more time, there is much more I would consider and explore.

First Use Experience

Many of the interactions we prototyped assume that users have already gone through onboarding and linked their smart fridge and Amazon account to the skill. That experience, whether it happens through voice or through the Alexa app, requires further work. The initial setup should also tell users what the skill is capable of doing.

Personalized Experience for Long-Term Users

One feature we prototyped was helping users order an ingredient directly from Amazon Fresh and have it delivered to their address. By learning a user's preferences over time, Cook Fresh could place such orders without confirming those preferences every time. For long-term users, Cook Fresh should be able to learn a great deal about their dietary preferences and tailor the experience to them (e.g. which recipes to recommend at what time, which ingredients to order). These details still need to be worked out.

User Testing

Though we brought our prototype to some users to help refine our design, more comprehensive user testing is required to validate whether the design actually solves our users' problems better, and whether the interaction makes sense for a variety of users in different contexts.

Reflections

Challenges for Voice Interface Design

I started this exercise, and enjoyed it so much, because VUI design poses unique challenges that pushed me to think about how design can compensate for the limitations of voice communication. These challenges include:

High Expectations from Users & Technical Constraints

Voice is a natural form of interaction for people. It makes sense for them to learn to use a GUI, but they'll be frustrated if they're forced to learn how to talk. While users expect to talk freely with a digital assistant, current technologies do not support such free conversation: it is still hard for computers to understand context and produce answers that consistently match users' expectations in a truly open-ended conversation. Therefore, it is up to the designer to phrase the digital assistant's prompts so that they elicit responses that are easy to process technically, without explicitly telling users what to say.

Non-Linear Interactions

GUI interactions are mostly linear; users need to navigate a certain path to get where they need to be. Voice interfaces, on the other hand, cannot and should not impose such constraints. Designers therefore need to anticipate the different responses and combo-breakers users might produce, and make the digital assistant flexible enough to handle them.

Voice Contains Less Information

People can process less auditory information than visual information. They can quickly scan a list and choose an item, but when they listen to a list, they have to hear the items one by one and will likely forget the earlier ones by the end. When designing for voice, designers therefore need to provide only the information that is necessary at that moment, and let users take their turn to make a choice or request more information.

Unchanging Design Principles

Despite all the differences between VUI design and GUI design, I was able to find common design principles that apply to both. For example, in both cases, designers need to provide just enough information to avoid information overload; we need to provide constraints to guide users through a certain path; we need to focus on the tasks users are trying to accomplish, think about their context, their needs, and provide solutions that best suit their needs within the context. Though the nature of different media & technologies requires us to pay special attention to certain principles (e.g. people can process much less auditory information compared to visual information, so we need to be even more concise when designing for audio), the underlying principles remain the same.

The Importance of User Testing

User testing seems to be even more important when designing for voice. Because voice interactions feel natural, users are simply more likely to stray from our predefined happy path and say something completely unexpected. As designers, we need to test our dialog flows with users to discover these possibilities and incorporate them into the design.